About The Book

A Publishers Weekly Best Book of 2014

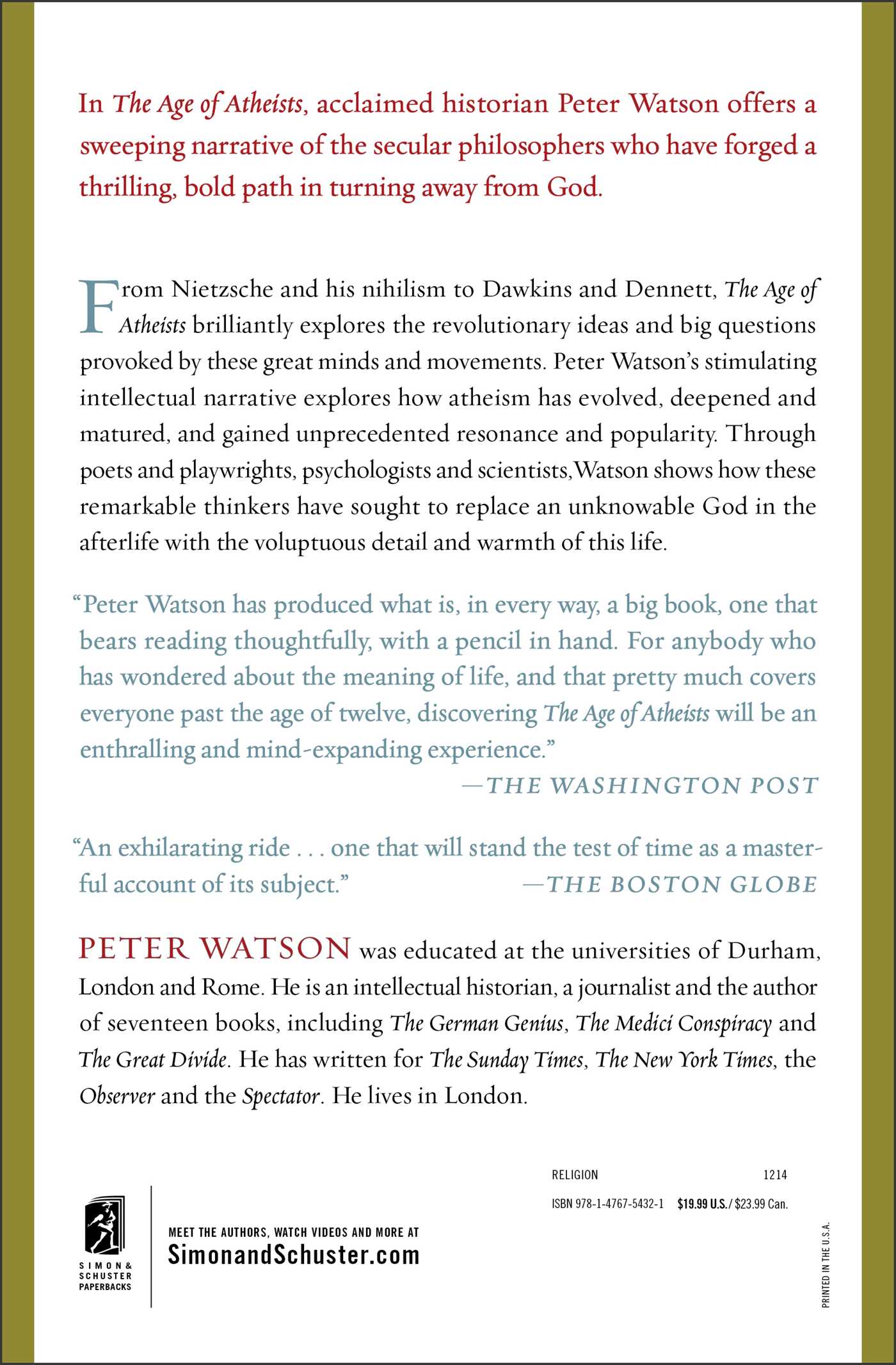

From one of England’s most distinguished intellectual historians comes “an exhilarating ride…that will stand the test of time as a masterful account of” (The Boston Globe) one of the West’s most important intellectual movements: Atheism.

In 1882, Friedrich Nietzsche declared that “God is dead,” and ever since, tens of thousands of brilliant, courageous, thoughtful individuals have devoted their creative energies to devising ways to live without God with self-reliance, invention, hope, wit, and enthusiasm. Now, for the first time, their story is revealed.

A captivating story of contest, failure, and success, The Age of Atheists sweeps up William James and the pragmatists; Sigmund Freud and psychoanalysis; Pablo Picasso, James Joyce, and Albert Camus; the poets of World War One and the novelists of World War Two; scientists, from Albert Einstein to Stephen Hawking; and the rise of the New Atheists: Dawkins, Harris, and Hitchens. This is a story of courage, of the thousands of individuals who, sometimes at great risk, devoted tremendous creative energies to devising ways to fill a godless world with self-reliance, invention, hope, wit, and enthusiasm. Watson explains how atheism has evolved and reveals that the greatest works of art and literature, of science and philosophy of the last century can be traced to the rise of secularism.

From Nietzsche to Daniel Dennett, Watson’s stirring intellectual history manages to take the revolutionary ideas and big questions of these great minds and movements and explain them, making the connections and concepts simple without being simplistic. The Age of Atheists is “highly readable and immensely wide-ranging…For anybody who has wondered about the meaning of life…an enthralling and mind-expanding experience” (The Washington Post).

Product Details

- Publisher: Simon & Schuster (December 23, 2014)

- Length: 640 pages

- ISBN13: 9781476754321
